Most engineering dashboards have the same problem: everything is green, and delivery still feels broken.

Deployment frequency is up. Code coverage looks fine. PRs are being merged at a healthy pace. And yet features are late, senior engineers are burned out, and nobody can explain why the roadmap keeps slipping.

The dashboard did not fail you. You measured the wrong things, or the right things in the wrong way.

Software engineering metrics are one of the most powerful tools an engineering leader has. Used well, they reveal bottlenecks before they become crises, align engineering performance with business outcomes, and give teams a shared language for continuous improvement. Used poorly, they create perverse incentives, surveillance cultures, and the illusion of progress with none of the substance.

This guide covers what actually matters: which metrics to track, which to abandon, how to avoid the traps that derail most measurement programs, and how to build a system that makes your engineering organization better, not just better at hitting numbers.

If you want to compare this broader measurement model with a more AI-specific lens, start with Engineering Metrics in the AI Era, DORA Metrics Are Not Enough in 2026, and AI Impact.

Table of Contents

- The Fundamental Problem with Engineering Metrics

- Goodhart's Law: The Hidden Trap in Every Metric Program

- Activity vs. Outcome Metrics: The Most Important Distinction

- The Delivery Metrics That Actually Matter

- Quality Metrics: The Guardrails You Cannot Skip

- Team Health Metrics: The Human Signal

- 2026 Industry Benchmarks: What Good Looks Like

- How to Build a Metrics System, Not a Metrics List

- Connecting Engineering Metrics to Business Outcomes

- Common Mistakes and How to Avoid Them

- Frequently Asked Questions

The Fundamental Problem with Engineering Metrics

Only about half of tech managers say their companies even attempt to measure developer productivity, and just a small minority have dedicated specialists for it. The teams that do measure often measure the wrong things, creating a peculiar situation where dashboards look excellent and engineering feels dysfunctional at the same time.

The root cause is confusion between metrics and KPIs. Metrics are descriptive: they capture what is happening. KPIs are interpretive: they help explain whether what is happening is good or bad, and why. Most teams collect metrics. Very few build KPIs.

A commit count is a metric. Whether that commit count reflects meaningful, value-delivering work or an inflated number designed to look productive requires context and pairing with other signals. Engineering leaders who mistake metrics for performance often end up with teams that are excellent at optimizing numbers while delivery outcomes stagnate.

The second problem is the individual versus system confusion. Software development is collaborative. The engineer who spends a day helping three teammates get unstuck may contribute enormously while their commit count is zero. The engineer who writes a thousand lines of code that create technical debt for years may look great on activity metrics. Measuring individual output in a collaborative system produces individual gaming, not team improvement.

The solution begins with a clear mental model: metrics measure motion; KPIs describe direction. And direction can only be understood at the system level, not the individual level.

Goodhart's Law: The Hidden Trap in Every Metric Program

Before choosing any metric, you need to understand the principle that breaks more measurement programs than almost anything else: Goodhart's Law.

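The principle traces back to British economist Charles Goodhart, who observed in 1975 that any observed statistical regularity tends to collapse once pressure is placed upon it for control purposes. It is best known today in anthropologist Marilyn Strathern's later paraphrase: "When a measure becomes a target, it ceases to be a good measure."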

In engineering, this plays out constantly. Teams rewarded for lines of code write more code than necessary. Teams chasing code coverage targets write tests that technically cover lines but do not verify meaningful behavior. Teams measured on story points inflate estimates until velocity numbers are meaningless for planning.

Here are the three most common Goodhart traps in engineering:

Story point inflation. When velocity becomes a performance target, teams start estimating a 3-point story as a 5 to look more productive. Cross-team comparisons become meaningless, and sprint planning stops reflecting actual capacity.

Coverage theater. When code coverage becomes a target, tests are written to hit percentages rather than verify behavior. A test that calls a function without asserting results increases coverage while catching nothing.

Deployment frequency gaming. Faster deployments are valuable, but shipping unstable changes to inflate deployment count creates more fire-fighting than value delivery. Speed without paired quality measurement is Goodhart in action.

The solution is not to stop measuring. It is to design a measurement system that is structurally resistant to gaming. The most reliable technique is paired, oppositional metrics. For every speed metric, add a quality guardrail. If you track deployment frequency, also track change failure rate. If you track cycle time, also track post-release defect rate. A team can inflate one signal. Inflating both simultaneously usually requires actually improving the underlying system, which is the point.
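Here is a minimal Python sketch of the paired-metrics idea. It assumes you already have before-and-after values for each metric. The metric names, the two pairs, and the `flag_possible_gaming` helper are all hypothetical. The rule it encodes is that a speed gain is suspect when its guardrail got worse over the same period.

```python
from dataclasses import dataclass


@dataclass
class MetricPair:
    """A speed metric and the quality guardrail it is paired with."""
    speed: str
    guardrail: str
    speed_higher_is_better: bool
    guardrail_higher_is_better: bool


# Hypothetical pairs mirroring the examples in the text.
PAIRS = [
    MetricPair("deployments_per_week", "change_failure_rate", True, False),
    MetricPair("cycle_time_hours", "post_release_defects", False, False),
]


def improved(before: float, after: float, higher_is_better: bool) -> bool:
    return after > before if higher_is_better else after < before


def worsened(before: float, after: float, higher_is_better: bool) -> bool:
    return after < before if higher_is_better else after > before


def flag_possible_gaming(before: dict, after: dict) -> list[str]:
    """Return a note for every speed metric that improved while its guardrail got worse."""
    flags = []
    for pair in PAIRS:
        speed_up = improved(before[pair.speed], after[pair.speed], pair.speed_higher_is_better)
        quality_down = worsened(before[pair.guardrail], after[pair.guardrail], pair.guardrail_higher_is_better)
        if speed_up and quality_down:
            flags.append(f"{pair.speed} improved but {pair.guardrail} worsened")
    return flags


before = {"deployments_per_week": 10, "change_failure_rate": 0.05,
          "cycle_time_hours": 80, "post_release_defects": 4}
after = {"deployments_per_week": 20, "change_failure_rate": 0.12,
         "cycle_time_hours": 60, "post_release_defects": 3}
print(flag_possible_gaming(before, after))
```

In this example, deployment frequency doubled while change failure rate rose, so that pair is flagged. Cycle time fell and defects also fell, so that pair is not flagged.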

Activity vs. Outcome Metrics: The Most Important Distinction

Every software engineering metric falls into one of two categories, and knowing the difference determines whether your measurement program helps or harms.

Activity metrics measure effort and output: lines of code, commit count, PR volume, hours logged, meetings attended, tickets closed. They are easy to collect because they are already captured by your tools. They feel productive to report on because the numbers are always moving.

The problem is that activity metrics can improve without business outcomes improving. Developers can write more code, merge more PRs, and close more tickets while shipping fewer meaningful features, introducing more bugs, and falling further behind on roadmap work. Activity metrics describe motion, not direction.

Outcome metrics measure results: features delivered to customers, defects resolved before reaching production, time saved in the delivery pipeline, reliability improvements experienced by users. They are harder to collect and harder to define, but they describe whether engineering work is actually creating value.

The practical test for distinguishing the two is simple: can this metric improve without the thing I care about improving? If lines of code can rise while shipping velocity stays flat, the answer is yes, and lines of code is an activity metric. If deployment frequency rises and lead time falls at the same time, the improvement is much more likely to be real.

This does not mean activity metrics have no place. PR review time, for example, is an activity signal, but it is also a leading indicator of cycle time and a direct input to developer experience. The rule is not to ban activity metrics. The rule is to avoid using them as standalone KPIs.

The Delivery Metrics That Actually Matter

Delivery metrics measure how efficiently your team moves work from idea to production. These are the closest thing the industry has to a shared standard for engineering performance.

Cycle Time

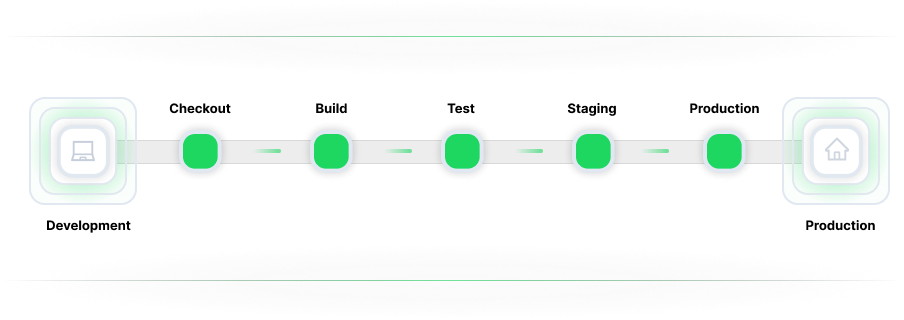

Cycle time measures the end-to-end elapsed time from when a developer starts working on a task to when that work reaches production. It captures coding, review, automated testing, deployment queues, and the deployment step itself.

Cycle time is one of the most useful single delivery metrics because it exposes bottlenecks wherever they actually are, not where you assume they are. A team with fast coding but slow review will still have a long cycle time, which correctly identifies review as the constraint.
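To show how cycle time exposes the constraint, the Python sketch below splits one change's cycle time into stages. It rests on several assumptions. The event names are hypothetical, so map them to whatever your Git host and CI/CD tools actually record. The first commit stands in for "work started" because most tools cannot see the true start.

```python
from datetime import datetime

# Hypothetical event names; the first commit is a proxy for "work started".
STAGES = [
    ("coding", "first_commit", "pr_opened"),
    ("pickup", "pr_opened", "first_review"),
    ("review", "first_review", "merged"),
    ("deploy", "merged", "deployed"),
]


def stage_hours(events: dict[str, datetime]) -> dict[str, float]:
    """Split one change's cycle time into per-stage durations in hours."""
    return {
        name: (events[end] - events[start]).total_seconds() / 3600
        for name, start, end in STAGES
    }


def cycle_time_hours(events: dict[str, datetime]) -> float:
    """End-to-end time from first commit to production deployment."""
    return (events["deployed"] - events["first_commit"]).total_seconds() / 3600


def bottleneck(events: dict[str, datetime]) -> str:
    """Name of the stage where this change spent the most time."""
    hours = stage_hours(events)
    return max(hours, key=hours.get)


events = {
    "first_commit": datetime(2026, 3, 2, 9, 0),
    "pr_opened": datetime(2026, 3, 2, 15, 0),
    "first_review": datetime(2026, 3, 4, 11, 0),
    "merged": datetime(2026, 3, 4, 16, 0),
    "deployed": datetime(2026, 3, 4, 18, 0),
}
print(cycle_time_hours(events), bottleneck(events))  # 57.0 pickup
```

In this example, coding took 6 hours but the pull request waited 44 hours for its first review. The total of 57 hours therefore points at review pickup, not coding, as the constraint.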

If you want a comparative lens for what strong looks like, use Engineering Benchmarks as context rather than as a pass-fail scoreboard.

Deployment Frequency

Deployment frequency measures how often your team ships code to production. High deployment frequency is not valuable by itself; it is a proxy for small batch size, confidence in release processes, and the ability to respond to customer needs quickly.
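Because deployment frequency is mainly valuable as a proxy, it helps to compute it next to a rough batch-size signal. The Python sketch below makes some assumptions. The input shape, a list of (date, commits shipped) tuples with one per successful production deployment, is hypothetical. Commits per deploy is only a crude stand-in for batch size.

```python
from datetime import date


def frequency_summary(deploys: list[tuple[date, int]], window_days: int) -> dict[str, float]:
    """Summarize deployment frequency over a window of days.

    deploys: one (deploy date, commits shipped) tuple per successful production deployment.
    """
    count = len(deploys)
    active_days = len({day for day, _ in deploys})
    total_commits = sum(commits for _, commits in deploys)
    return {
        "deploys_per_day": count / window_days,
        "share_of_days_with_a_deploy": active_days / window_days,
        # Rough batch-size proxy: fewer commits per deploy suggests smaller batches.
        "commits_per_deploy": total_commits / count if count else 0.0,
    }


week = [
    (date(2026, 3, 2), 3),
    (date(2026, 3, 2), 2),
    (date(2026, 3, 3), 4),
    (date(2026, 3, 5), 1),
    (date(2026, 3, 6), 5),
]
print(frequency_summary(week, window_days=5))
```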

When deployment frequency is low, look beyond the number. Manual approvals, brittle CI/CD pipelines, insufficient test automation, and deployment anxiety are usually the real issues.

Lead Time for Changes

Lead time measures the time between a code commit and a successful production deployment. It is one of the clearest measures of pipeline speed and responsiveness.
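Here is a minimal Python sketch of lead time for changes. It assumes you can pair each commit with the time it reached production. The sketch reports the median as well as the mean because a single stuck change can distort an average. That is a design choice here, not something the DORA definition prescribes.

```python
from datetime import datetime
from statistics import mean, median


def lead_times_hours(changes: list[tuple[datetime, datetime]]) -> list[float]:
    """changes: (commit time, production deployment time) for each commit."""
    return [(deployed - committed).total_seconds() / 3600 for committed, deployed in changes]


changes = [
    (datetime(2026, 3, 2, 10, 0), datetime(2026, 3, 2, 14, 0)),   # 4 h
    (datetime(2026, 3, 2, 11, 0), datetime(2026, 3, 3, 11, 0)),   # 24 h
    (datetime(2026, 3, 3, 9, 0), datetime(2026, 3, 10, 9, 0)),    # 168 h, one stuck change
]
hours = lead_times_hours(changes)
print(median(hours), round(mean(hours), 1))  # 24.0 65.3
```

One week-long outlier nearly triples the mean, while the median still describes the typical change.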

An organization with short lead time can respond to a production bug, customer request, or market opportunity much faster than one with long lead times. That makes lead time not just an engineering metric but a business responsiveness metric.

If you are using DORA metrics, lead time is one of the core signals you should keep, but always interpret it alongside review pressure, quality outcomes, and team capacity.

Quality Metrics: The Guardrails You Cannot Skip

Speed metrics without quality guardrails are how dashboards turn green while engineering falls apart. Every delivery metric needs a paired quality signal.

Change Failure Rate

Change failure rate is the percentage of deployments that cause a degraded service, incident, rollback, or outage. It is the most important companion metric to deployment frequency.

A team that deploys more often while holding change failure rate steady has probably improved. A team that deploys more often while change failure rate rises may simply be shipping instability faster.
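Reading the two metrics together can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions. Each deployment is recorded as a boolean meaning "caused degraded service, an incident, a rollback, or an outage". The `compare_periods` helper and its labels are hypothetical.

```python
def change_failure_rate(failed: list[bool]) -> float:
    """failed: one flag per production deployment, True if it caused
    degraded service, an incident, a rollback, or an outage."""
    return sum(failed) / len(failed) if failed else 0.0


def compare_periods(before: list[bool], after: list[bool]) -> str:
    """Interpret deployment frequency and change failure rate together."""
    if len(after) <= len(before):
        return "deployment frequency did not increase"
    if change_failure_rate(after) <= change_failure_rate(before):
        return "deploying more often with stable or better quality"
    return "deploying more often but shipping more failures"


last_quarter = [True] * 2 + [False] * 18     # 20 deploys, 10% failed
this_quarter = [True] * 4 + [False] * 36     # 40 deploys, 10% failed
risky_quarter = [True] * 10 + [False] * 30   # 40 deploys, 25% failed
print(compare_periods(last_quarter, this_quarter))
print(compare_periods(last_quarter, risky_quarter))
```

This sketch uses a strict comparison. In practice you would probably allow a small tolerance so that normal noise in failure rate is not read as a trend.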

Defect Density and Defect Escape Rate

Defect density measures the concentration of defects in a given code volume, usually expressed as defects per thousand lines of code (KLOC). More actionable for many teams is defect escape rate: the percentage of defects that reach production rather than being caught in review, testing, or staging.
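Both calculations are simple ratios. The Python sketch below assumes you can count defects by where they were first found. The counts in the example are made up.

```python
def defect_density_per_kloc(defects: int, lines_of_code: int) -> float:
    """Defects per thousand lines of code."""
    return defects / (lines_of_code / 1000)


def defect_escape_rate(found_in_production: int, found_before_production: int) -> float:
    """Share of all defects that were first found in production."""
    total = found_in_production + found_before_production
    return found_in_production / total if total else 0.0


print(defect_density_per_kloc(30, 60_000))  # 0.5
print(defect_escape_rate(6, 24))            # 0.2
```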

A rising defect escape rate usually means your quality gates are not keeping up with your delivery velocity. That is especially important in AI-assisted environments, where code output may rise faster than review capacity.

Code Churn Rate

Code churn measures how often recently written code is rewritten or deleted shortly after creation. High churn often points to unclear requirements, rushed design decisions, or code that was never stable in the first place.
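Here is a minimal Python sketch of a churn rate. It rests on several assumptions. You already know, for each added line, when it was later rewritten or deleted (for example from diff history). That attribution step is out of scope here. The 21-day window and the `AddedLine` record are hypothetical choices, not a standard definition.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class AddedLine:
    added_at: datetime
    rewritten_at: Optional[datetime]  # None if the line is still unchanged


def churn_rate(lines: list[AddedLine], window: timedelta = timedelta(days=21)) -> float:
    """Share of newly added lines that were rewritten or deleted within `window`."""
    if not lines:
        return 0.0
    churned = sum(
        1 for line in lines
        if line.rewritten_at is not None and line.rewritten_at - line.added_at <= window
    )
    return churned / len(lines)


start = datetime(2026, 3, 2)
lines = [
    AddedLine(start, start + timedelta(days=3)),   # rewritten quickly: counts as churn
    AddedLine(start, start + timedelta(days=30)),  # changed much later: not churn
    AddedLine(start, None),
    AddedLine(start, None),
]
print(churn_rate(lines))  # 0.25
```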

It can also be a useful diagnostic when you are trying to explain why throughput looks healthy while delivery confidence keeps dropping.

Mean Time to Recovery

Mean time to recovery measures how quickly your team restores service after a production incident. It reflects operational maturity: monitoring quality, runbooks, rollback capability, and incident response discipline.
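MTTR is the average of incident durations. The Python sketch below assumes each incident records when service became degraded and when it was restored. The timestamps are illustrative.

```python
from datetime import datetime


def mean_time_to_recovery_hours(incidents: list[tuple[datetime, datetime]]) -> float:
    """incidents: (service degraded at, service restored at) per production incident."""
    if not incidents:
        raise ValueError("no incidents in this period")
    durations = [(restored - degraded).total_seconds() / 3600 for degraded, restored in incidents]
    return sum(durations) / len(durations)


incidents = [
    (datetime(2026, 3, 2, 10, 0), datetime(2026, 3, 2, 10, 30)),  # 0.5 h
    (datetime(2026, 3, 5, 22, 0), datetime(2026, 3, 5, 23, 30)),  # 1.5 h
    (datetime(2026, 3, 9, 8, 0), datetime(2026, 3, 9, 12, 0)),    # 4.0 h
]
print(mean_time_to_recovery_hours(incidents))  # 2.0
```

A single long outage can dominate the mean, so consider reporting the median alongside it.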

If you want a broader operating picture, Symptoms can help frame where quality and delivery friction are showing up before they become chronic.

Team Health Metrics: The Human Signal

Delivery and quality metrics measure what your engineering system produces. Team health metrics measure the people operating inside it. Teams that optimize delivery metrics at the expense of team health tend to underperform over longer time horizons.

Developer Satisfaction and eNPS

Developer satisfaction measures how fulfilled and engaged engineers are with their work, tools, and team environment. Employee Net Promoter Score, or eNPS, measures how likely engineers are to recommend their organization as a place to work.
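eNPS uses the standard Net Promoter calculation. Responses on a 0 to 10 scale count as promoters at 9 or 10 and as detractors at 6 or below. The score is the percentage of promoters minus the percentage of detractors, which gives a range from -100 to 100. Here is a minimal Python sketch with made-up survey responses:

```python
def enps(scores: list[int]) -> float:
    """Employee Net Promoter Score from 0-10 survey responses."""
    if not scores:
        raise ValueError("no survey responses")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("scores must be on a 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


responses = [10, 9, 9, 8, 7, 6, 5, 3, 9, 10]
print(enps(responses))  # 20.0
```

Passives (7 and 8) do not add to either group, but they still count in the denominator.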

Strong delivery metrics with weak team health are a warning sign. A team may appear productive while accumulating burnout, distrust in tooling, and avoidable friction.

PR Review Load

PR review time is one of the most common bottlenecks in modern delivery systems. Long review times increase merge conflicts, slow feedback loops, and create costly context switching.

In AI-assisted environments, this problem often gets worse. More generated code can mean more review work for the same senior engineers. That is one reason AI Impact has become an important measurement layer for engineering leaders.
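Two simple signals can make review load visible. The first is how concentrated reviews are among a few people. The second is how long pull requests wait for a first review. The Python sketch below is minimal and rests on stated assumptions. The input shapes and reviewer names are hypothetical, and PR pickup time is defined here as the time from opening to first review.

```python
from collections import Counter
from datetime import datetime
from statistics import median


def review_share(reviewers: list[str]) -> dict[str, float]:
    """reviewers: the reviewer's name for each completed review in the period."""
    counts = Counter(reviewers)
    total = sum(counts.values())
    return {name: n / total for name, n in counts.most_common()}


def median_pickup_hours(prs: list[tuple[datetime, datetime]]) -> float:
    """prs: (opened at, first review at) for each pull request."""
    waits = [(first_review - opened).total_seconds() / 3600 for opened, first_review in prs]
    return median(waits)


reviews = ["ana"] * 6 + ["bo"] * 3 + ["cy"]
prs = [
    (datetime(2026, 3, 2, 9, 0), datetime(2026, 3, 2, 11, 0)),   # 2 h
    (datetime(2026, 3, 2, 10, 0), datetime(2026, 3, 2, 15, 0)),  # 5 h
    (datetime(2026, 3, 2, 16, 0), datetime(2026, 3, 3, 18, 0)),  # 26 h
]
print(review_share(reviews))
print(median_pickup_hours(prs))
```

In this example, one reviewer handles 60% of all reviews. That is the kind of concentration that tends to grow when generated code raises PR volume.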

Sprint Predictability

Sprint predictability measures how closely actual delivery matches planned scope. When unplanned work consistently consumes a large share of capacity, it usually points to quality issues, technical debt, operational churn, or poor planning assumptions.
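Here is a minimal Python sketch of both ratios. It assumes work is counted consistently, in story points or in tickets, and that completed items are tagged as planned or unplanned. The helper name and the example numbers are hypothetical.

```python
def sprint_summary(planned: float, completed_planned: float,
                   completed_unplanned: float) -> dict[str, float]:
    """Predictability and unplanned-work share for one sprint."""
    completed_total = completed_planned + completed_unplanned
    return {
        # How much of the committed scope was actually delivered.
        "predictability": completed_planned / planned if planned else 0.0,
        # How much of the delivered work was never in the plan.
        "unplanned_share": completed_unplanned / completed_total if completed_total else 0.0,
    }


print(sprint_summary(planned=40, completed_planned=30, completed_unplanned=10))
```

Tracking the two numbers side by side separates "we over-committed" from "unplanned work took a quarter of our capacity".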

Healthy engineering systems make room for new feature work, maintenance, and rework in a balanced way. Measurement should make that balance visible rather than hiding it under a single velocity number.

2026 Industry Benchmarks: What Good Looks Like

Industry benchmark reports are useful when treated as directional context rather than rigid judgment.

Here are the kinds of ranges leaders often use to calibrate performance:

- Cycle time: elite under 48 hours, median around 83 hours, red flag above 124 hours

- Deployment frequency: elite multiple times per service per day, strong teams around daily, concern when it drops well below that

- PR size: smaller PRs are strongly correlated with better review speed and healthier cycle time

- Lead time: strong teams consistently keep low-risk changes moving quickly

- Change failure rate: elite teams keep it extremely low, strong teams still stay under clear risk thresholds

One critical principle: the goal is not to compare absolute numbers against industry peers as if engineering were a league table. A team improving from a 200-hour cycle time to a 100-hour cycle time has made meaningful progress even if it has not reached elite thresholds. Use benchmarks as directional targets, not pass-fail judgments.
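Benchmarks and longitudinal progress can be reported together. This Python sketch uses the 48-hour and 124-hour cycle time thresholds from the list above. The band names and helper functions are hypothetical, and the thresholds should be read as directional.

```python
def cycle_time_band(hours: float) -> str:
    """Place a median cycle time in the directional ranges quoted above."""
    if hours < 48:
        return "elite"
    if hours > 124:
        return "red flag"
    return "middle"


def relative_improvement(before_hours: float, after_hours: float) -> float:
    """Fractional reduction in cycle time; 0.5 means it was halved."""
    return (before_hours - after_hours) / before_hours


print(cycle_time_band(200), "->", cycle_time_band(100), relative_improvement(200, 100))
# red flag -> middle 0.5
```

For the team in the example, the band still reads "middle", but the 50% reduction is the more useful signal.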

How to Build a Metrics System, Not a Metrics List

The most common failure mode in engineering metrics programs is treating metrics as a list to be collected rather than a system to be designed.

A list of 20 metrics with no relationships between them creates 20 simultaneous optimization pressures, each of which can be gamed independently. A system has structure.

Three principles matter most:

Paired metrics prevent gaming

Every speed metric needs a quality counterpart. Deployment frequency should be paired with change failure rate. Cycle time should be paired with post-release defect rate. PR volume should be paired with PR review load.

Leading indicators enable proactive intervention

DORA-style delivery metrics are often lagging indicators. They tell you what already happened. Leading indicators such as PR size, PR pickup time, build success rate, and developer satisfaction trends tell you what is likely to happen next.

Different audiences need different cadences

A single dashboard for developers, managers, engineering leadership, and executives usually serves none of them well.

A practical structure looks like this:

- Weekly team view: PR cycle time by stage, PR pickup time, build success rate, work in progress

- Monthly leadership view: DORA metrics, developer experience, quality trends, and AI-specific signals where relevant

- Quarterly executive view: engineering performance mapped to roadmap delivery, customer outcomes, incident cost, and investment efficiency

Connecting Engineering Metrics to Business Outcomes

Engineering metrics that live only in engineering dashboards are doing half their job. The highest-leverage use of a mature metrics program is translating technical performance into business language.

Deployment frequency maps to time-to-market. Faster, safer releases mean customers experience product improvements sooner.

Change failure rate maps to incident cost. Fewer failed changes mean less engineering time spent on recovery, lower support burden, and less customer disruption.

Developer experience maps to retention cost. Satisfaction and friction indicators often reveal attrition risk long before headcount loss shows up in finance reporting.

When engineering leaders connect metrics to these business outcomes, measurement stops being a reporting exercise and becomes a decision tool.

Common Mistakes and How to Avoid Them

Mistake 1: Using metrics for individual evaluation

Commits per day, PRs merged, and lines of code almost always measure the wrong thing in a collaborative system. Use metrics for team-level learning and system-level improvement.

Mistake 2: Tracking everything

If everything is a priority, nothing is. Start with a small balanced set covering delivery, quality, and team health. Add more signals only when a specific diagnostic question requires them.

Mistake 3: Ignoring the measurement culture

Metrics introduced without context create surveillance anxiety. Make metrics visible to teams, use them in retrospectives, and frame them as tools for improvement rather than evaluation.

Mistake 4: Comparing teams against each other

Every team operates in a different context. Use metrics for longitudinal improvement within a team, not for simplistic rankings across teams.

Mistake 5: Setting metrics and forgetting them

Metrics become stale as systems evolve. Review your measurement program regularly and ask whether the signals still reflect what matters.

Frequently Asked Questions

What are the most important software engineering metrics?

The most important software engineering metrics usually fall into three groups: delivery metrics such as deployment frequency, lead time, and cycle time; quality metrics such as change failure rate, code churn, and defect escape rate; and team health metrics such as PR review load, developer satisfaction, and predictability.

What is Goodhart's Law and why does it matter for engineering metrics?

Goodhart's Law says that when a measure becomes a target, it stops being a good measure. In engineering, this happens when teams optimize the number rather than the underlying outcome, such as inflating story points or chasing code coverage without improving real quality.

What are vanity metrics in software engineering?

Vanity metrics are numbers that look impressive but do not help explain performance or guide better decisions. Lines of code, raw commit count, and hours logged are common examples when used without context.

What are the DORA metrics and why are they important?

DORA metrics are deployment frequency, lead time for changes, change failure rate, and mean time to recovery (recent DORA reports call the last one failed deployment recovery time). They matter because they provide a widely used, evidence-based baseline for delivery performance.

How do you measure developer productivity without creating a surveillance culture?

Measure at the team level, not the individual level. Use metrics to support retrospectives, diagnosis, and improvement. Pair quantitative signals with qualitative team feedback so that delivery gains do not come at the cost of sustainability.

Conclusion

Software engineering metrics are neither a dashboard exercise nor a management surveillance tool. Used with discipline, they are the clearest window an engineering organization has into its own performance: where value is being delivered, where bottlenecks are accumulating, and whether the pace of delivery is sustainable.

The core principles are straightforward:

- measure outcomes, not just activity

- pair speed metrics with quality guardrails

- track team health with the same seriousness as delivery performance

- translate engineering metrics into business language

Start small. Pick one delivery metric, one quality metric, and one team health metric. Establish a baseline. Understand the system. Then improve it.

That sequence (measure, understand, then improve) is what separates organizations that use metrics to get better from those that use metrics to look good.


Written by Emre Dundar

Emre Dundar is the Co-Founder & Chief Product Officer of Oobeya. Before starting Oobeya, he worked as a DevOps and Release Manager at Isbank and Ericsson. He later transitioned to consulting, focusing on SDLC, DevOps, and code quality. Since 2018, he has been dedicated to building Oobeya, helping engineering leaders improve productivity and quality.